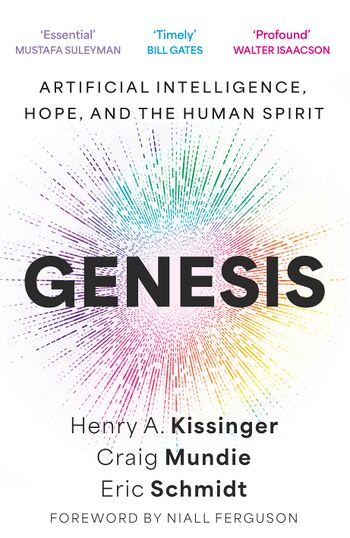

Genesis: Artificial Intelligence, Hope, and the Human Spirit

| Book Title | Genesis: Artificial Intelligence, Hope, and the Human Spirit |
|---|---|
| Subject | New World Order |

Copyright © 2024 by the Estate of Henry A. Kissinger, Craig Mundie, and Eric Schmidt

Foreword copyright © 2024 by Niall Ferguson

Hachette Book Group supports the right to free expression and the value of copyright. The purpose of copyright is to encourage writers and artists to produce the creative works that enrich our culture. The scanning, uploading, and distribution of this book without permission is a theft of the authors’ intellectual property. If you would like permission to use material from the book (other than for review purposes), please contact permissions@hbgusa.com. Thank you for your support of the authors’ rights.

Little, Brown and Company

Hachette Book Group

1290 Avenue of the Americas, New York, NY 10104

littlebrown.com

First Edition: November 2024

Little, Brown and Company is a division of Hachette Book Group, Inc. The Little, Brown name and logo are trademarks of Hachette Book Group, Inc.

The publisher is not responsible for websites (or their content) that are not owned by the publisher. The Hachette Speakers Bureau provides a wide range of authors for speaking events. To find out more, go to hachettespeakersbureau.com or email hachettespeakers@hbgusa.com.

Little, Brown and Company books may be purchased in bulk for business, educational, or promotional use. For information, please contact your local bookseller or the Hachette Book Group Special Markets Department at special.markets@hbgusa.com.

Print book interior design by Bart Dawson

ISBN 9780316581325

Library of Congress Control Number: 2024945132

E3-20240926-JV-NF-ORI

CONTENTS

- Cover
- Title Page
- Copyright
- Dedication
- Foreword: Niall Ferguson
- In Memoriam: Henry A. Kissinger
- Introduction
- PART I: IN THE BEGINNING
  - CHAPTER 1: Discovery
  - CHAPTER 2: The Brain
  - CHAPTER 3: Reality
- PART II: THE FOUR BRANCHES
  - CHAPTER 4: Politics
  - CHAPTER 5: Security
  - CHAPTER 6: Prosperity
  - CHAPTER 7: Science
- PART III: THE TREE OF LIFE
  - CHAPTER 8: Strategy
- Conclusion
- Acknowledgments